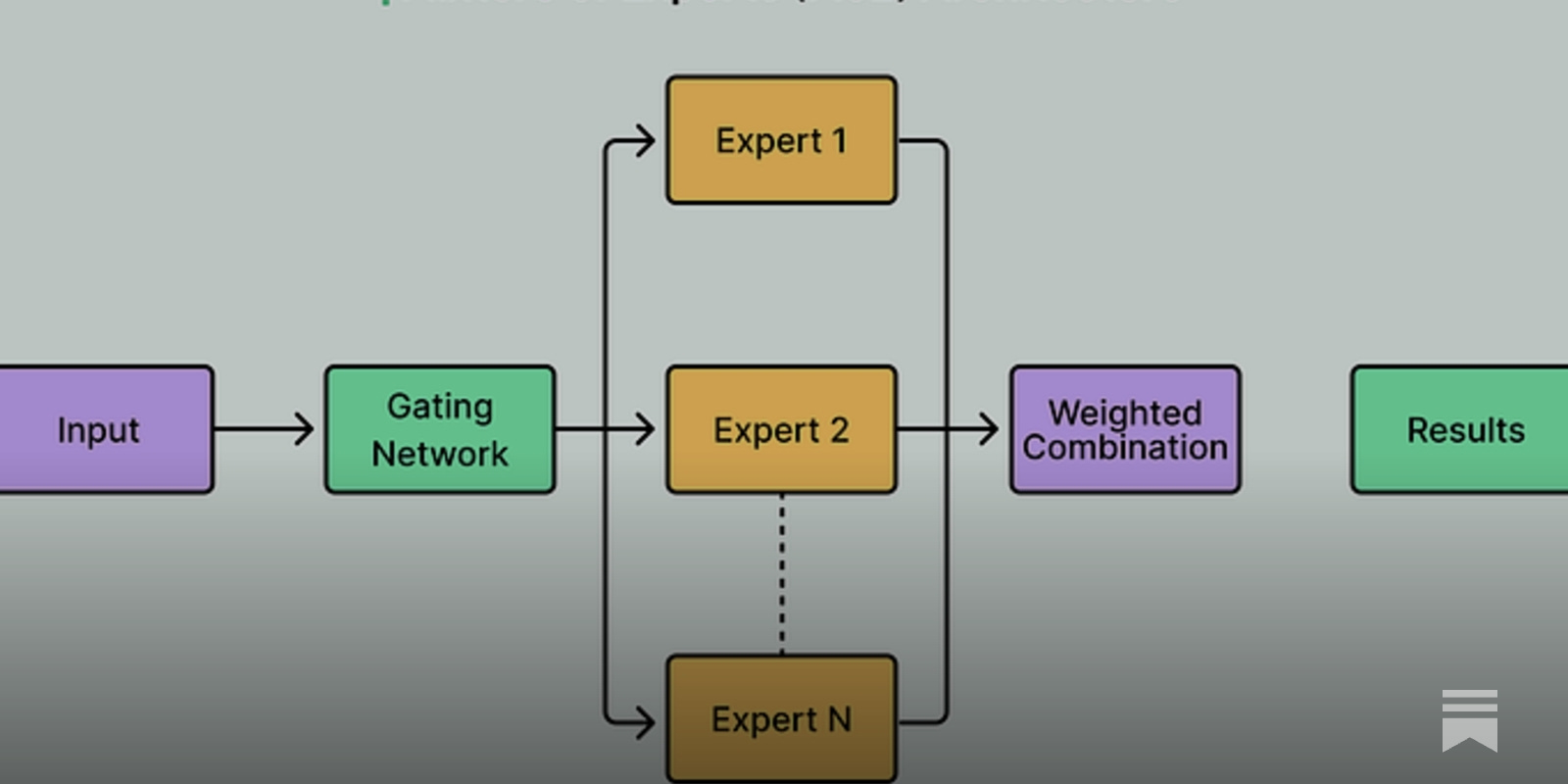

Currently, most frontier open-weight LLMs share the same basic architecture: a Mixture-of-Experts (MoE) transformer combined with various attention optimizations. The actual performance differences therefore come from post-training and infrastructure design.
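To make "MoE transformer" concrete, here is a minimal sketch of a sparse MoE layer's forward pass for a single token. It assumes a top-k softmax router whose gate weights are renormalized over the selected experts only. This is one common routing scheme, not necessarily the one every model in the linked article uses. The names `moe_forward`, `router_weights`, and `experts` are hypothetical and chosen for illustration.

```python
import math
from typing import Callable, List, Sequence


def softmax(xs: Sequence[float]) -> List[float]:
    # Numerically stable softmax: subtract the max before exponentiating
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: Sequence[float],
    router_weights: Sequence[Sequence[float]],
    experts: Sequence[Callable[[Sequence[float]], List[float]]],
    k: int = 2,
) -> List[float]:
    # Assumed router: one linear score per expert (dot product with that expert's row)
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # Sparse activation: only the k highest-scoring experts run for this token
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Gate weights are renormalized over the selected experts only (an assumption)
    gates = softmax([logits[i] for i in top])
    out = [0.0] * len(token)
    for g, i in zip(gates, top):
        y = experts[i](token)
        # Output is the gate-weighted sum of the chosen experts' outputs
        out = [o + g * v for o, v in zip(out, y)]
    return out


# Usage: four toy "experts" that just scale the input by 1, 2, 3, 4
experts = [(lambda x, s=s: [s * v for v in x]) for s in (1.0, 2.0, 3.0, 4.0)]
router = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [-1.0, 0.0]]
print(moe_forward([1.0, 0.0], router, experts, k=2))
```

Only k of the experts do any work per token. This is why MoE models can hold far more parameters than they activate on each forward pass, and it is also why routing, load balancing, and expert placement become infrastructure concerns.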

The Architecture Behind Open-Source LLMs

In this article, we will cover various open-source models and the engineering bets that define each one.

https://blog.bytebytego.com/p/the-architecture-behind-open-source

Seonglae Cho