ProLU SAE

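Rather than abandoning the linearity rationale that underlies SAE interpretability, ProLU reinforces it through the choice of nonlinearity. With ReLU, the encoder bias translates the pre-activation, so the output of an active unit is shifted rather than proportional to its input. In ProLU the bias only performs noise suppression: it decides whether a unit fires, and an active unit outputs its pre-activation unshifted. The cost is that, unlike ReLU, ProLU gives the bias no usable derivative (it is zero wherever the gate is not switching and undefined at the switching point), so a synthetic gradient is introduced for the backward pass to let the bias train.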
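The difference between a bias that shifts and a bias that only gates can be sketched in a few lines of plain Python. This is a minimal scalar illustration, not the post's implementation: the function names are hypothetical, the forward rule follows the gating description above, and the surrogate backward pass is one simple straight-through-style choice assumed here for illustration, which may differ from the synthetic gradients the post actually uses.

```python
def relu_with_bias(m: float, b: float) -> float:
    """Standard SAE encoder unit: the bias shifts the pre-activation."""
    return max(m + b, 0.0)


def prolu(m: float, b: float) -> float:
    """ProLU-style forward pass: the bias only gates the unit.

    An active unit returns m itself, not m + b.
    """
    return m if (m + b > 0.0 and m > 0.0) else 0.0


def finite_diff_wrt_bias(m: float, b: float, eps: float = 1e-3) -> float:
    """Central finite difference of prolu with respect to b."""
    return (prolu(m, b + eps) - prolu(m, b - eps)) / (2.0 * eps)


def prolu_surrogate_grads(m: float, b: float, upstream: float = 1.0):
    """Assumed surrogate backward pass (illustrative, not from the post).

    Passes the upstream gradient to both m and b whenever the unit is
    active, as ReLU(m + b) would, so the bias still receives a signal.
    """
    active = 1.0 if (m + b > 0.0 and m > 0.0) else 0.0
    return upstream * active, upstream * active


if __name__ == "__main__":
    m, b = 2.0, -0.5
    print("ReLU with bias:", relu_with_bias(m, b))      # shifted by b
    print("ProLU:", prolu(m, b))                        # unshifted
    print("true d/db (finite diff):", finite_diff_wrt_bias(m, b))
    print("surrogate grads (dm, db):", prolu_surrogate_grads(m, b))
```

With a negative bias, doubling the input of an active ProLU unit doubles its output, while the ReLU unit's output is offset by the bias. The finite-difference check shows why the bias cannot be trained from the true derivative alone.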

ProLU: A Nonlinearity for Sparse Autoencoders — AI Alignment Forum

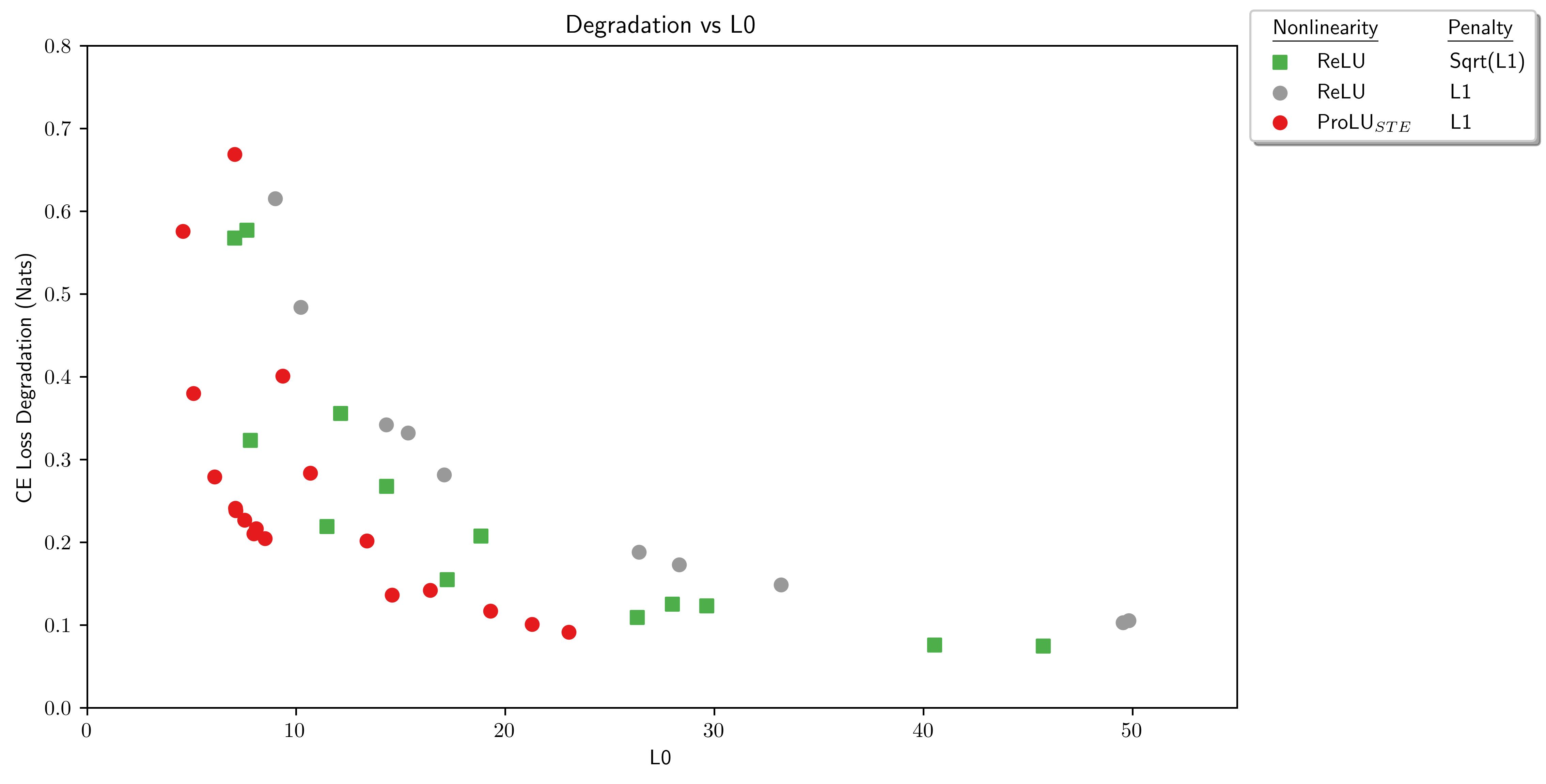

Abstract: This paper presents ProLU, an alternative to ReLU for the activation function in sparse autoencoders that produces a Pareto improvement over…

https://www.alignmentforum.org/posts/HEpufTdakGTTKgoYF/prolu-a-nonlinearity-for-sparse-autoencoders

Seonglae Cho
