Open-source inference serving software

Triton Inference Usages

Document

Triton Inference Server — NVIDIA Triton Inference Server

Getting Started

https://docs.nvidia.com/deeplearning/triton-inference-server/user-guide/docs/index.html

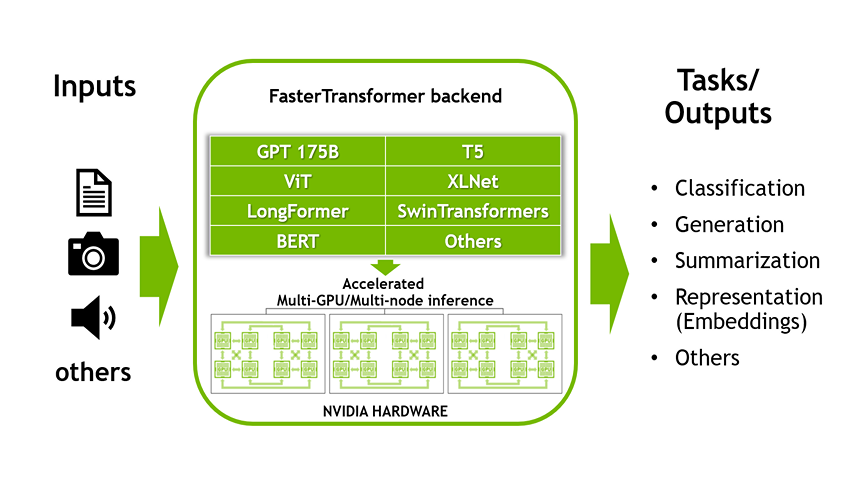

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog

Learn about FasterTransformer, one of the fastest libraries for distributed inference of transformers of any size, and the benefits of using it.

https://developer.nvidia.com/blog/accelerated-inference-for-large-transformer-models-using-nvidia-fastertransformer-and-nvidia-triton-inference-server/

Seonglae Cho
