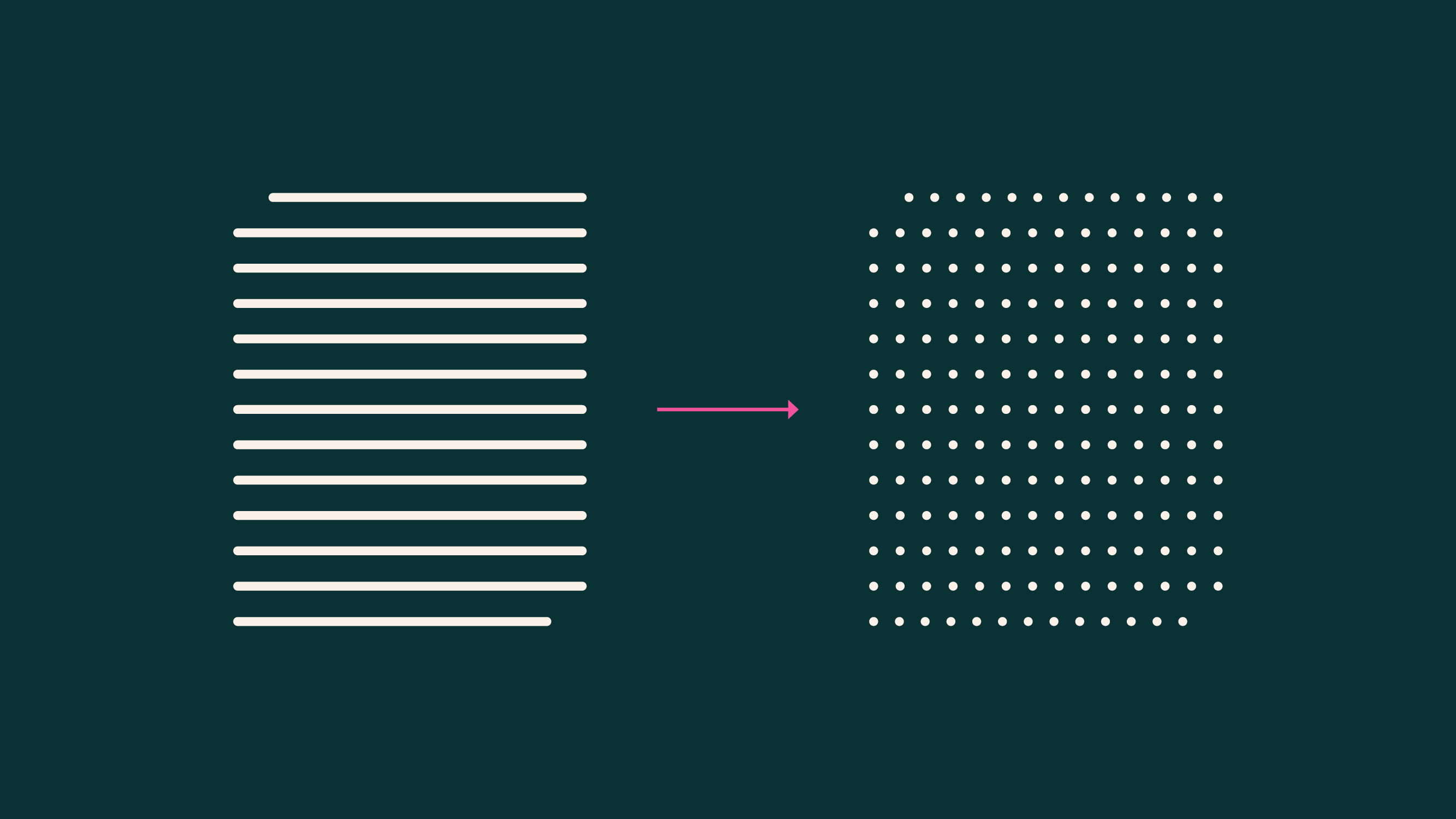

When using UTF-8 encoding, each character is represented by 1-4 bytes, and each byte value is treated as a single token ID.

Solving the OOV (out-of-vocabulary) problem: since any text can always be represented at the byte level, unregistered words and neologisms never fall outside the vocabulary, so OOV problems fundamentally do not occur. The base vocabulary needs only the 256 possible byte values (plus any special tokens), which helps reduce model size because no very large vocabulary dictionary is required.
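The byte-to-token mapping described above can be sketched in a few lines of Python. This is only a minimal illustration, not the tokenizer of any particular model: the function names `byte_tokenize` and `byte_detokenize` are hypothetical, and real byte-level models typically reserve extra IDs for special tokens.

```python
def byte_tokenize(text: str) -> list[int]:
    """Encode text as UTF-8 and use each byte value (0-255) as a token ID."""
    return list(text.encode("utf-8"))


def byte_detokenize(ids: list[int]) -> str:
    """Invert byte_tokenize: rebuild the byte string and decode it as UTF-8."""
    return bytes(ids).decode("utf-8")


if __name__ == "__main__":
    # Characters that take 1, 2, 3, and 4 bytes in UTF-8, respectively.
    for ch in ["a", "é", "한", "😀"]:
        ids = byte_tokenize(ch)
        print(repr(ch), len(ids), ids)

    # A made-up word still tokenizes: every ID stays inside the 256-entry range.
    neologism = "skibidiverse"
    ids = byte_tokenize(neologism)
    assert all(0 <= i < 256 for i in ids)
    assert byte_detokenize(ids) == neologism
```

Because every ID is a byte value, a word the model has never seen cannot map to an unknown token; it is simply a new sequence of already-known IDs.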

Bolmo

Introducing Bolmo: Byteifying the next generation of language models | Ai2

Bolmo is a new byte-level model family built by adapting Olmo 3 into a fast, flexible byte-based model with a short extra training run.

https://allenai.org/blog/bolmo

Seonglae Cho
