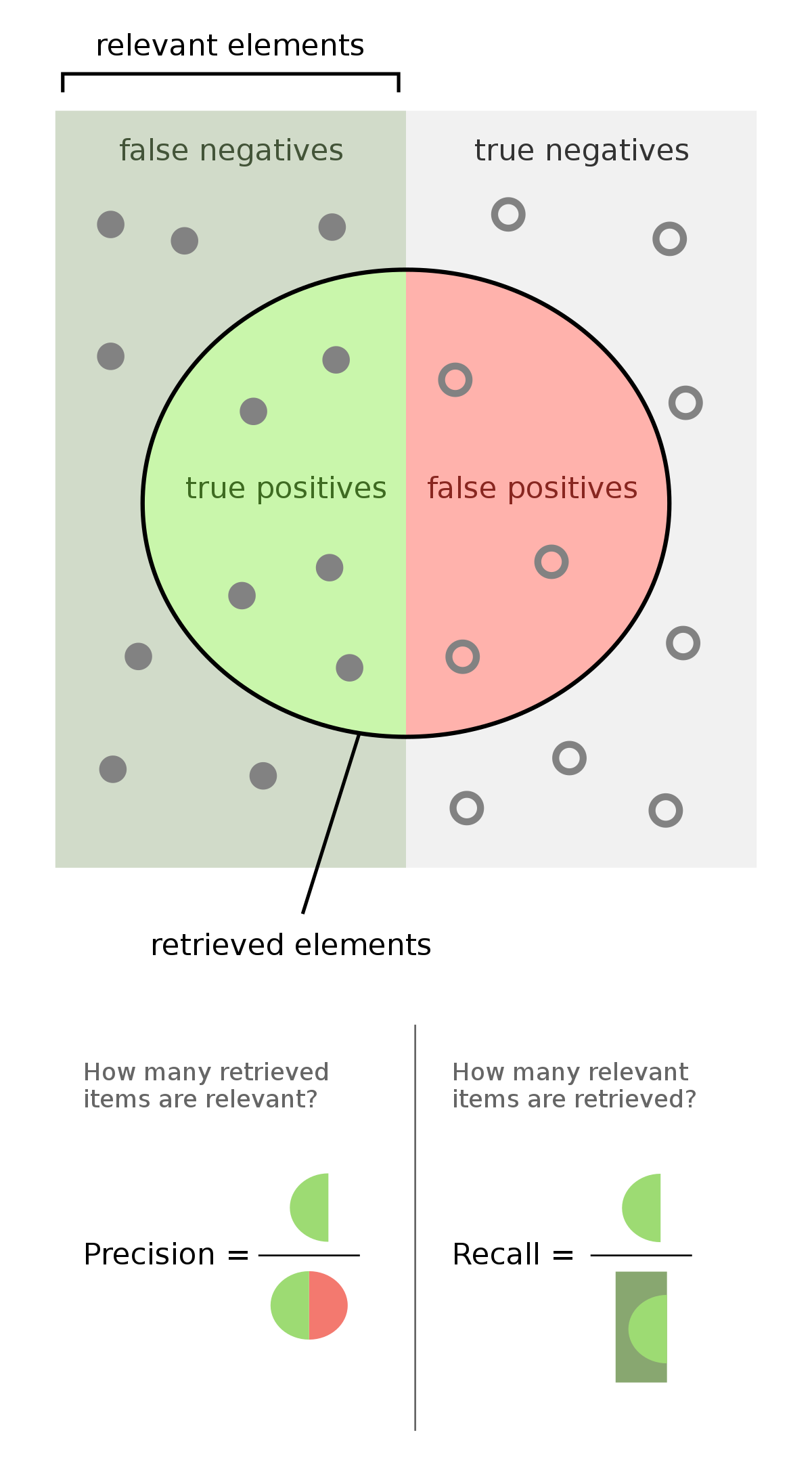

- High recall means fewer false negatives

- High precision means fewer false positives
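The two bullets above can be checked with a small sketch. The function name `precision_recall`, the toy labels, and the convention of returning 0.0 for an empty denominator are assumptions made for illustration, not part of the original note.

```python
def precision_recall(y_true, y_pred):
    """Compute precision and recall for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Assumed convention: return 0.0 when a denominator is empty.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Toy data (hypothetical): 2 true positives, 1 false positive, 1 false negative.
y_true = [1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0]
precision, recall = precision_recall(y_true, y_pred)
print(precision, recall)
```

Removing the false positive raises precision and removing the false negative raises recall, which is what the bullets state.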

Recall Precision Curve

Interpolated precision at recall level R is the maximum precision observed at any recall of R or higher.
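A minimal sketch of this "maximum precision at recall R or higher" rule, assuming the curve is given as parallel lists of recall and precision values. The function name `interpolated_precision` and the sample points are hypothetical.

```python
def interpolated_precision(recalls, precisions, r):
    """Return the max precision over all curve points whose recall is >= r."""
    candidates = [p for rec, p in zip(recalls, precisions) if rec >= r]
    # Assumed convention: 0.0 when no point reaches recall r.
    return max(candidates, default=0.0)


# Hypothetical curve points, ordered by increasing recall.
recalls = [0.2, 0.4, 0.6, 0.8, 1.0]
precisions = [1.0, 0.67, 0.75, 0.57, 0.5]

print(interpolated_precision(recalls, precisions, 0.4))
print(interpolated_precision(recalls, precisions, 0.7))
```

In this example the raw precision at recall 0.4 is 0.67, but the interpolated value takes the higher precision reached later at recall 0.6, which smooths the sawtooth shape of the raw curve.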

The precision-recall curve depends on the dataset, particularly on the proportion of positive labels. At threshold 0 every sample is predicted positive, so recall is 1 and precision equals the ratio of positive labels to the total number of samples. Precision at that end of the curve therefore cannot reach 0; it is fixed by the class balance.
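The dependence on positive labels can be illustrated with a small sketch. It assumes scores in [0, 1] and a "predict positive when score >= threshold" rule, so threshold 0 marks every sample positive. The function name and both toy datasets are hypothetical.

```python
def precision_at_threshold(y_true, scores, threshold):
    """Precision when every sample with score >= threshold is predicted positive."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for t, p in zip(y_true, predicted) if p and t == 1)
    n_predicted = sum(predicted)
    # Assumed convention: 0.0 when nothing is predicted positive.
    return tp / n_predicted if n_predicted else 0.0


# Same scores, two hypothetical label sets with different class balance.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
balanced = [1, 0, 1, 0, 1, 0, 1, 0, 1, 0]  # 5 positives out of 10
skewed = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]    # 1 positive out of 10

print(precision_at_threshold(balanced, scores, 0.0))
print(precision_at_threshold(skewed, scores, 0.0))
```

With everything predicted positive, precision is just the share of positives in each dataset, so the same model can produce very different curves on datasets with different class balance.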

Mean Average Precision (MAP)

- More weight to the top items

- Only binary relevance

Most standard among the TREC community is MAP, which provides a single-figure measure of quality across recall levels.
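As a rough sketch of how MAP could be computed, the code below assumes each query is a ranked list of binary relevance flags (1 = relevant) and that every relevant item appears somewhere in the list. Standard definitions divide by the total number of relevant documents, including those never retrieved. The function names and example rankings are hypothetical.

```python
def average_precision(ranked_relevance):
    """AP for one query: mean of precision@k over the ranks k that hold a relevant item."""
    hits = 0
    precisions = []
    for k, rel in enumerate(ranked_relevance, start=1):
        if rel:
            hits += 1
            precisions.append(hits / k)
    # Assumed convention: 0.0 when the list contains no relevant item.
    return sum(precisions) / len(precisions) if precisions else 0.0


def mean_average_precision(queries):
    """MAP: the mean of per-query average precision."""
    return sum(average_precision(q) for q in queries) / len(queries)


# Hypothetical ranked results for two queries.
queries = [[1, 0, 1, 0], [0, 1, 0, 0]]
print(mean_average_precision(queries))

# A relevant item at rank 1 scores higher than the same item at rank 3.
print(average_precision([1, 0, 0]), average_precision([0, 0, 1]))
```

The last line shows the "more weight to the top items" property: the same single relevant item contributes less the further down the ranking it appears.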

Precision and recall

A precision-recall curve plots precision as a function of recall; usually precision will decrease as the recall increases. Alternatively, values for one measure can be compared for a fixed level at the other measure (e.g. precision at a recall level of 0.75) or both are combined into a single measure.

https://en.wikipedia.org/wiki/Precision_and_recall#:~:text=A%20precision%2Drecall%20curve%20plots,combined%20into%20a%20single%20measure

Seonglae Cho
