Diffusion Hyperfeatures

https://arxiv.org/pdf/2305.14334

Diffusion Hyperfeatures: Searching Through Time and Space for Semantic Correspondence

Diffusion Hyperfeatures is a framework for consolidating the multi-scale and multi-timestep internal representations of a diffusion model for tasks such as semantic correspondence.

https://diffusion-hyperfeatures.github.io/

Readout Guidance

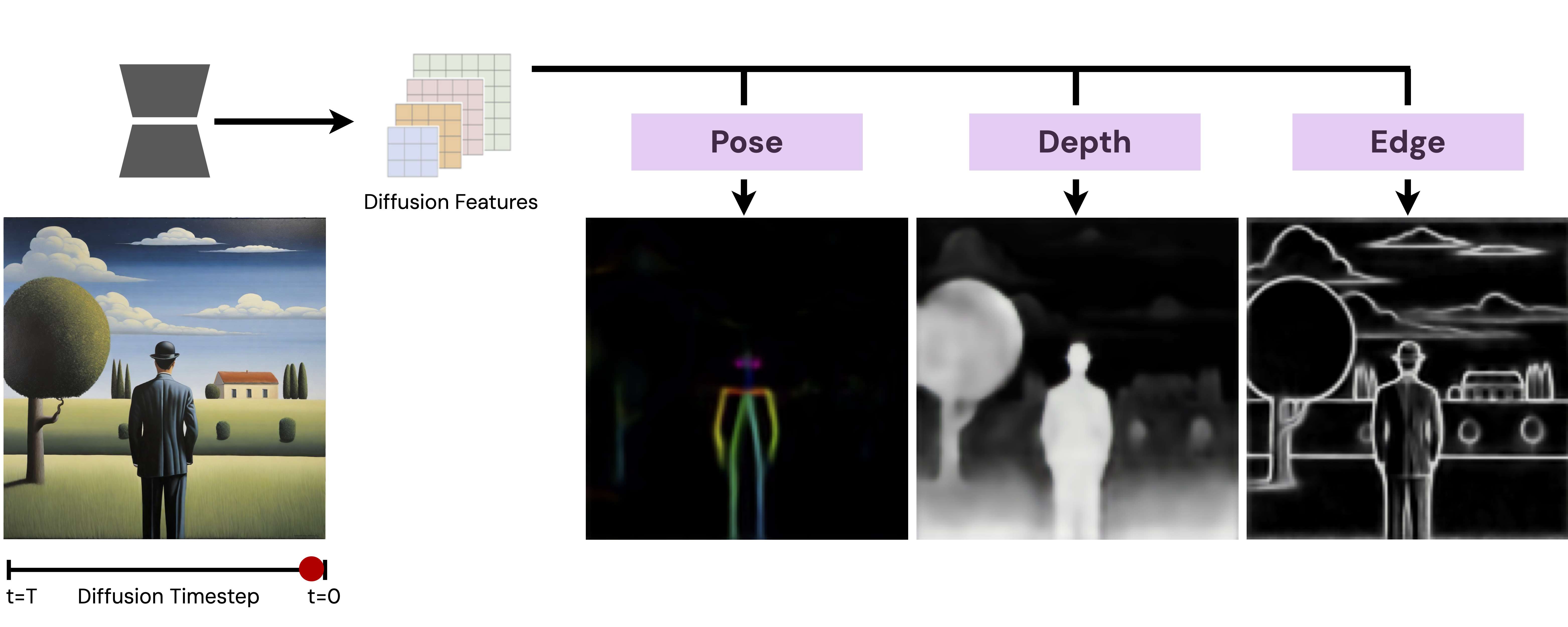

Readout Guidance conditions image generation on control signals predicted from a diffusion model's own internal features. Because those features are already informative, a lightweight readout head is enough to enable a wide range of conditional controls. The readout head architecture extends Diffusion Hyperfeatures: it takes intermediate features from a frozen U-Net decoder, applies a learned projection layer and bilinear upsampling to each, and then computes a weighted average.
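The aggregation described above (project each feature map, upsample it bilinearly, then take a weighted average) can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the projection is simplified to a per-pixel linear map, the averaging weights are assumed to come from a softmax over learnable logits, and the names `bilinear_upsample`, `aggregate_features`, and `mixing_logits` are hypothetical.

```python
import numpy as np


def bilinear_upsample(x, out_h, out_w):
    """Bilinearly resize a (C, H, W) map to (C, out_h, out_w), corners aligned."""
    _, h, w = x.shape
    ys = np.linspace(0, h - 1, out_h)
    xs = np.linspace(0, w - 1, out_w)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[None, :, None]  # vertical interpolation weights
    wx = (xs - x0)[None, None, :]  # horizontal interpolation weights
    rows0, rows1 = x[:, y0], x[:, y1]
    top = rows0[:, :, x0] * (1 - wx) + rows0[:, :, x1] * wx
    bottom = rows1[:, :, x0] * (1 - wx) + rows1[:, :, x1] * wx
    return top * (1 - wy) + bottom * wy


def aggregate_features(features, projections, mixing_logits, out_size):
    """Fuse per-layer feature maps into one map.

    features:      list of (C_i, H_i, W_i) arrays (stand-ins for U-Net decoder features)
    projections:   list of (C_out, C_i) matrices (assumed 1x1-conv-style projections)
    mixing_logits: one scalar per feature map; softmax-normalised (an assumption)
    """
    mixing_logits = np.asarray(mixing_logits, dtype=float)
    weights = np.exp(mixing_logits - mixing_logits.max())
    weights = weights / weights.sum()
    out_h, out_w = out_size
    fused = 0.0
    for feat, proj, weight in zip(features, projections, weights):
        projected = np.einsum("oc,chw->ohw", proj, feat)  # per-pixel linear projection
        fused = fused + weight * bilinear_upsample(projected, out_h, out_w)
    return fused


# Two toy feature maps at different resolutions, fused at 16x16.
features = [np.ones((2, 4, 4)), 3.0 * np.ones((2, 8, 8))]
projections = [np.eye(2), np.eye(2)]
fused = aggregate_features(features, projections, [0.0, 0.0], (16, 16))
print(fused.shape)  # (2, 16, 16)
```

In a real head the feature maps would come from several decoder layers (and, in Diffusion Hyperfeatures, several timesteps), and the projections and mixing logits would be trained while the U-Net stays frozen.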

As an extension of classifier guidance, it replaces classification-based guidance with regression-based guidance. The guidance update rule is $x_{t-1} \leftarrow x_{t-1} - w \, \nabla_{x_t} d\big(r, f(x_t)\big)$, where $f$ is the learned readout head, $d$ is a distance function, $w$ is the guidance weight, and $r$ is the target readout. For relative attributes between two images, it instead compares against a reference image readout $f(x_t^{\text{ref}})$. The three heads are trained differently: the spatially aligned head is trained with pixel-wise MSE loss; the correspondence feature head is trained with a contrastive loss using symmetric cross-entropy; and the appearance similarity head uses video data with anchor–positive–negative triplets and a cosine-distance hinge loss with margin 0.5.
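To make the regression-based guidance step and the appearance-head loss more concrete, here is a toy NumPy sketch. It rests on several assumptions that are not in the source: the readout head is replaced by a linear map so the gradient can be written analytically (a real head would be differentiated through the diffusion model with autodiff), the distance is taken to be squared L2, and the hinge loss uses the standard triplet-margin form. All function names are hypothetical.

```python
import numpy as np


def readout(x, head_weights):
    """Toy linear readout head f(x) = W x (stand-in for a learned head)."""
    return head_weights @ x


def squared_distance(target, prediction):
    """Distance d(r, f(x)) as a squared L2 norm (one possible choice of d)."""
    diff = prediction - target
    return float(diff @ diff)


def guidance_step(x, target, head_weights, guidance_weight):
    """One guidance update: x <- x - w * grad_x d(r, f(x))."""
    residual = readout(x, head_weights) - target
    grad = 2.0 * head_weights.T @ residual  # analytic gradient for the linear toy head
    return x - guidance_weight * grad


def relative_guidance_step(x, x_ref, head_weights, guidance_weight):
    """Relative variant: the target is the readout of a reference sample."""
    target = readout(x_ref, head_weights)
    return guidance_step(x, target, head_weights, guidance_weight)


def cosine_distance(a, b):
    """1 - cosine similarity between two vectors."""
    return 1.0 - float(a @ b) / (np.linalg.norm(a) * np.linalg.norm(b))


def triplet_hinge_loss(anchor, positive, negative, margin=0.5):
    """Hinge loss on cosine distances (assumed standard triplet-margin form)."""
    gap = cosine_distance(anchor, positive) - cosine_distance(anchor, negative)
    return max(0.0, gap + margin)


rng = np.random.default_rng(0)
W = rng.normal(size=(3, 5))   # toy head weights
x = rng.normal(size=5)        # toy noisy sample
r = rng.normal(size=3)        # toy target readout

before = squared_distance(r, readout(x, W))
x_new = guidance_step(x, r, W, guidance_weight=0.01)
after = squared_distance(r, readout(x_new, W))
print(after < before)  # True: the step moves the readout toward the target
```

In an actual sampler this update would be interleaved with the usual denoising step at each timestep, and the guidance weight trades off adherence to the control signal against sample quality.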

https://arxiv.org/pdf/2312.02150

Readout Guidance: Learning Control from Diffusion Features

We train very small networks called readout heads to predict useful properties and guide image generation.

https://readout-guidance.github.io/

Seonglae Cho
