AI Optimization, Inference Optimization

Inference-optimized serving is fast, but it usually provides no API access to hidden states

Model Inference Tools

AI Server

Model Inference Servers

AI Inference Notion

AI Performance Libraries

LLM inference cost is going down fast

Welcome to LLMflation - LLM inference cost is going down fast ⬇️ | Andreessen Horowitz

For an LLM of equivalent performance, the inference cost is decreasing by 10x every year. What cost $60 per million tokens in 2021 costs $0.06 per million tokens today.

https://a16z.com/llmflation-llm-inference-cost

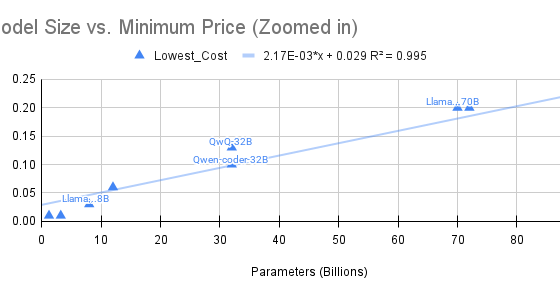
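As a quick sanity check on the numbers quoted above, the two price points can be turned into an implied yearly reduction factor. This is only a sketch: the helper names `annual_cost_reduction` and `projected_price` are hypothetical, and the 3-year span assumes "today" means 2024, which the quoted summary does not state explicitly.

```python
def annual_cost_reduction(start_price: float, end_price: float, years: float) -> float:
    """Geometric-mean yearly reduction factor implied by two price points."""
    return (start_price / end_price) ** (1.0 / years)


def projected_price(start_price: float, yearly_factor: float, years: float) -> float:
    """Price after `years` if the cost keeps dropping by `yearly_factor` each year."""
    return start_price / (yearly_factor ** years)


# Price points quoted above, in USD per million tokens.
start_price = 60.0
end_price = 0.06

# Assumption: "today" is 2024, i.e. 3 years after 2021.
years = 3

total_reduction = start_price / end_price
yearly_factor = annual_cost_reduction(start_price, end_price, years)

print(f"total reduction: {total_reduction:.0f}x")
print(f"implied yearly factor: {yearly_factor:.1f}x")
print(f"price after {years} years at 10x/year: ${projected_price(start_price, 10.0, years):.2f}")
```

Under that assumption, a 1000x total drop over 3 years corresponds to roughly 10x per year, which matches the quoted trend. A different end year would change the implied yearly factor.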

Inference cost with model size

Observations About LLM Inference Pricing — LessWrong

This work was done as part of the MIRI Technical Governance Team. It reflects my views and may not reflect those of the organization. …

https://www.lesswrong.com/posts/mRKd4ArA5fYhd2BPb/observations-about-llm-inference-pricing

Seonglae Cho
