Dialogue System

Humans should focus on composing and organizing information rather than providing answers, because AI is much better at supplying information and giving answers.

In any case, the final form of an AI product will likely be communication.

Helpfulness, Expertise, Transparency, Clarity, Thoroughness

Think of LLMs as simulators, not as entities. Asking "What do you think about xyz?" assumes a "you" that does not exist. Next time try: "What would be a good group of people to explore xyz? What would they say?"

Current transformer-based LLMs clearly possess a usable level of intelligence, but they do not have an identity the way humans do. Because of the vast amount of training data, many intelligences or identities are superimposed in a single model, which is why results can be inconsistent. From a simulation perspective this is actually an advantage: if you treat the LLM as an entity while conversing with it, you might get angry or emotional, but if you view the exchange as prompt simulation, you won't.
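The reframing above can be sketched as a pair of prompt builders. This is only an illustration: the function names `entity_prompt` and `simulation_prompt` are hypothetical, and the wording of the simulation prompt is taken directly from the quote above rather than from any library or API.

```python
def entity_prompt(topic: str) -> str:
    """Entity framing: addresses the model as if it were a single 'you'."""
    return f"What do you think about {topic}?"


def simulation_prompt(topic: str) -> str:
    """Simulator framing: asks the model to pick and voice a group of perspectives."""
    return (
        f"What would be a good group of people to explore {topic}? "
        "What would they say?"
    )


if __name__ == "__main__":
    # Print both framings side by side for the same topic.
    print(entity_prompt("xyz"))
    print(simulation_prompt("xyz"))
```

The second prompt makes the simulation explicit, so inconsistent answers read as different simulated voices rather than as one entity contradicting itself.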

Chat AI Services

Chat Group Services

Dialogue System Notion

Model autoselection

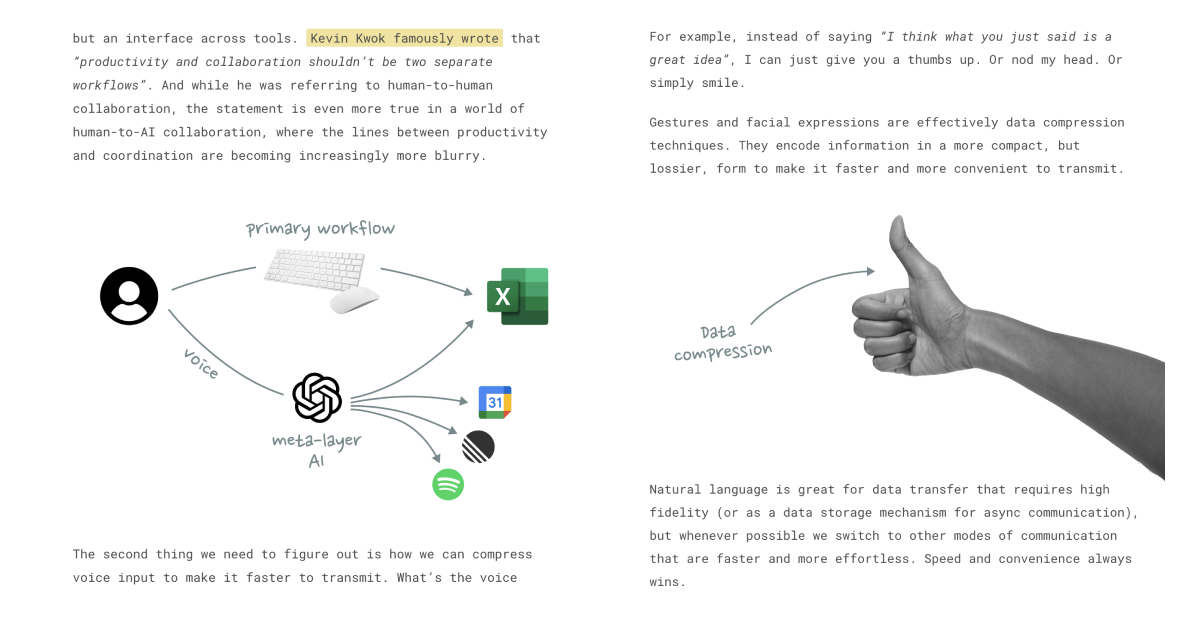

The Great AI UI Unification

ChatGPT starts cleaning up the cruft...

https://spyglass.org/chatgpt-ai-ui/

Performance

Chat with Open Large Language Models

https://chat.lmsys.org/

Chatbot Arena Leaderboard - a Hugging Face Space by lmsys

Discover amazing ML apps made by the community

https://huggingface.co/spaces/lmsys/chatbot-arena-leaderboard

Stats

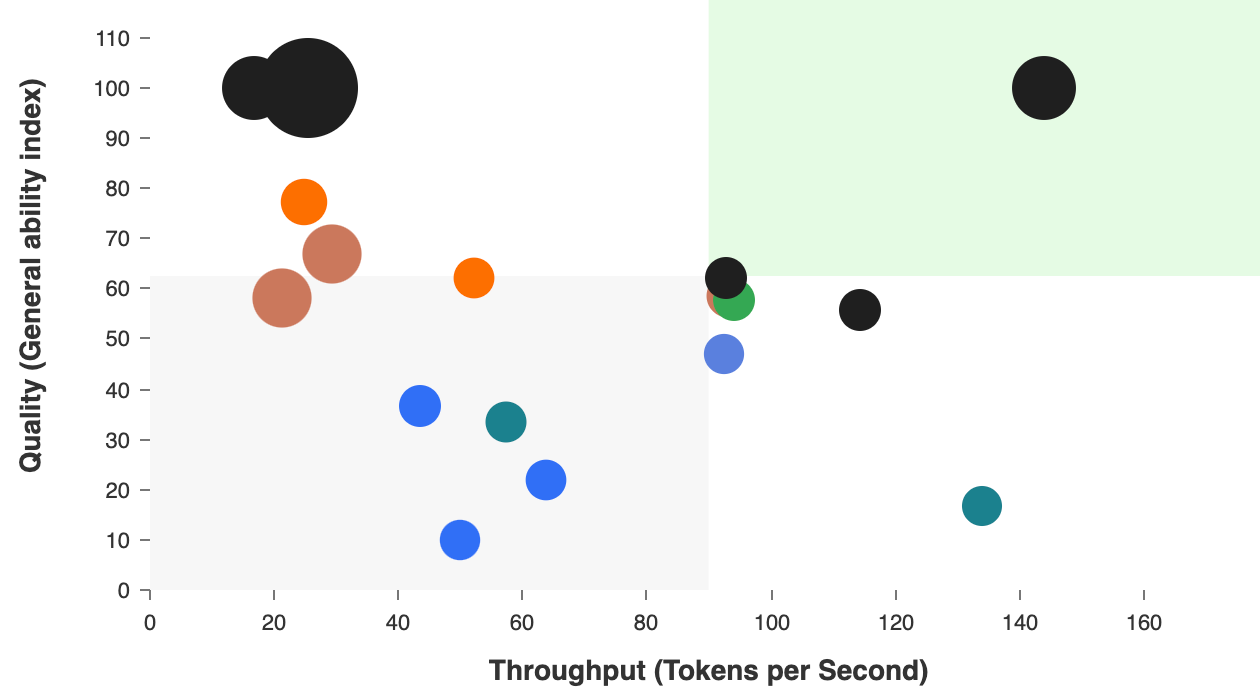

Model & API Hosts Analysis | Artificial Analysis

Comparison and analysis of AI models and API hosting providers. Independent benchmarks across key metrics including quality, price, performance and speed (throughput & latency).

https://artificialanalysis.ai/

How Are Consumers Using Generative AI? | Andreessen Horowitz

To see how people are interacting with generative AI, we used data to rank the top 50 GenAI web products by monthly visits.

https://a16z.com/how-are-consumers-using-generative-ai

AI Personality

Claude’s Character \ Anthropic

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

https://www.anthropic.com/research/claude-character

Using a crosscoder for chat model diffing reveals issues with the traditional L1 sparsity approach: many "chat-specific features" are falsely identified, because they are actually existing concepts whose decoder shrinks to zero in one model during training. Most chat-exclusive latents are training artifacts rather than genuinely new capabilities.

Complete Shrinkage → A shared concept where one model's decoder shrinks to zero.

Latent Decoupling → The same concept is represented by different latent combinations in the two models.

Using Top-K (L0-style) sparsity instead of L1 reduces false positives and retains only alignment-related features. The effects of chat tuning are primarily not about capabilities themselves, but about safety/refusal mechanisms, dialogue format processing, response length and summarization controls, and template-token-based control. In other words, chat tuning acts more like a shallow layer that steers existing capabilities.

arxiv.org

https://arxiv.org/pdf/2504.02922
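A minimal sketch of the difference between the two sparsity styles discussed above, in plain Python. It is a simplified illustration under stated assumptions, not the paper's implementation: `top_k_activation` and `l1_sparsity_penalty` are hypothetical names, and the L1 term is assumed to weight each latent's activation by its per-model decoder norm, which is one common way to write a crosscoder sparsity loss.

```python
def top_k_activation(pre_acts, k):
    """Top-K (L0-style) sparsity: keep the k largest pre-activations, zero the rest."""
    if k <= 0:
        return [0.0] * len(pre_acts)
    # Indices of the k largest pre-activations.
    order = sorted(range(len(pre_acts)), key=lambda i: pre_acts[i], reverse=True)
    keep = set(order[:k])
    # Kept values are clamped at zero so activations stay non-negative.
    return [max(v, 0.0) if i in keep else 0.0 for i, v in enumerate(pre_acts)]


def l1_sparsity_penalty(act, decoder_norms):
    """Assumed L1-style penalty for one latent: activation times summed decoder norms."""
    return abs(act) * sum(decoder_norms)


if __name__ == "__main__":
    # Top-K fixes how many latents fire, regardless of their magnitudes.
    print(top_k_activation([0.5, 2.0, 0.1, 1.5], k=2))

    # Under the assumed L1 penalty, shrinking one model's decoder norm to zero
    # lowers the loss, so a shared concept can end up looking model-exclusive.
    shared = l1_sparsity_penalty(1.0, decoder_norms=[1.0, 1.0])
    shrunk = l1_sparsity_penalty(1.0, decoder_norms=[1.0, 0.0])
    print(shared, shrunk)
```

Because the Top-K constraint counts active latents instead of penalising magnitudes, it gives no direct reward for shrinking a decoder to zero, which is consistent with the reduction in false positives described above.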

Seonglae Cho
